Team:Heidelberg/Modeling/descriptions

From 2010.igem.org


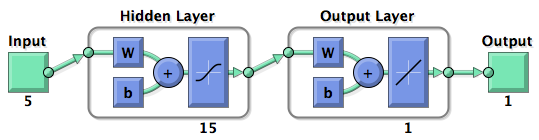


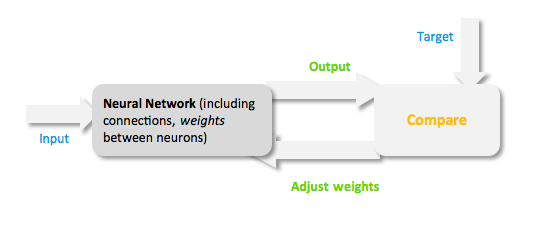

During the learning process, the difference between the desired output (target) and the network output is minimised. This difference is usually called the cost; the cost function measures how far the network output is from the desired value. A common cost function is the mean-squared error, and several algorithms can be used to minimise it. The following figure displays such a loop.


Revision as of 01:56, 28 October 2010

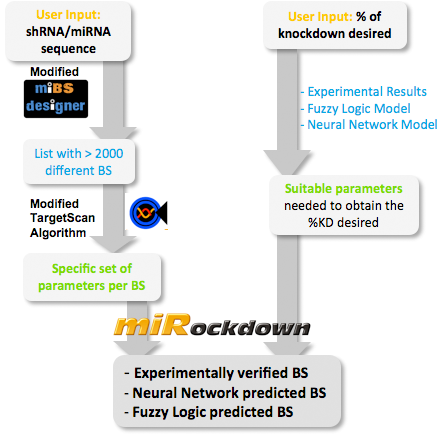

miBEAT

miBEAT (miRNA Binding site Engineering and Assembly Tool) is a graphical user interface whose back end is a compilation of multiple individual models and scripts that interact with each other to generate constructs.

miRockdown

There is an urgent need for an easy-to-use tool that generates a binding site the user can use to modify protein levels. Several tools can predict mRNA knockdown, but our approach aims at the final objective: protein levels (especially for medical applications like gene therapy).

How to use miRockdown

Right from the beginning of our modeling project, we knew we would have to integrate our trained models into an online GUI. We made it as user-friendly as we could: the user only needs to input the desired knockdown percentage (kd%) and choose an sh/miRNA sequence to get a binding site that satisfies their needs.

Overview of the miRockdown script flow. The knockdown percentage (kd%) input invokes the selection of the appropriate experimental binding site (BS) or theoretical binding site parameters. The miRNA sequence starts the generation of BS sequences. Subsequently, these BS sequences are characterized by a modified TargetScan algorithm. Finally, the parameters of the theoretical BS are compared with the parameters of the generated BSs, and the closest of the generated BSs is given as output.
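The final comparison step of the script flow can be illustrated with a minimal sketch. It assumes that each binding site is described by two numeric parameters (a 3'-pairing score and an AU-content score) and that "closest" means the smallest Euclidean distance; the function name, the parameter names and all values are hypothetical and are not taken from the miRockdown scripts.

```python
import math

def closest_binding_site(target, candidates):
    """Return the candidate whose parameters lie closest to the target parameters."""
    def distance(params):
        # Euclidean distance over the parameters named in the target (an assumption).
        return math.sqrt(sum((params[k] - target[k]) ** 2 for k in target))
    return min(candidates, key=lambda c: distance(c["params"]))

# Hypothetical theoretical BS parameters selected for the requested kd%.
target = {"three_prime_score": 3.0, "au_score": 0.6}

# Hypothetical generated binding sites with made-up parameter values.
candidates = [
    {"name": "BS_a", "params": {"three_prime_score": 1.0, "au_score": 0.4}},
    {"name": "BS_b", "params": {"three_prime_score": 3.5, "au_score": 0.5}},
    {"name": "BS_c", "params": {"three_prime_score": 5.0, "au_score": 0.9}},
]

best = closest_binding_site(target, candidates)
print(best["name"])
```

In practice the two scores may need rescaling before a distance is computed, since they do not share a common range.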

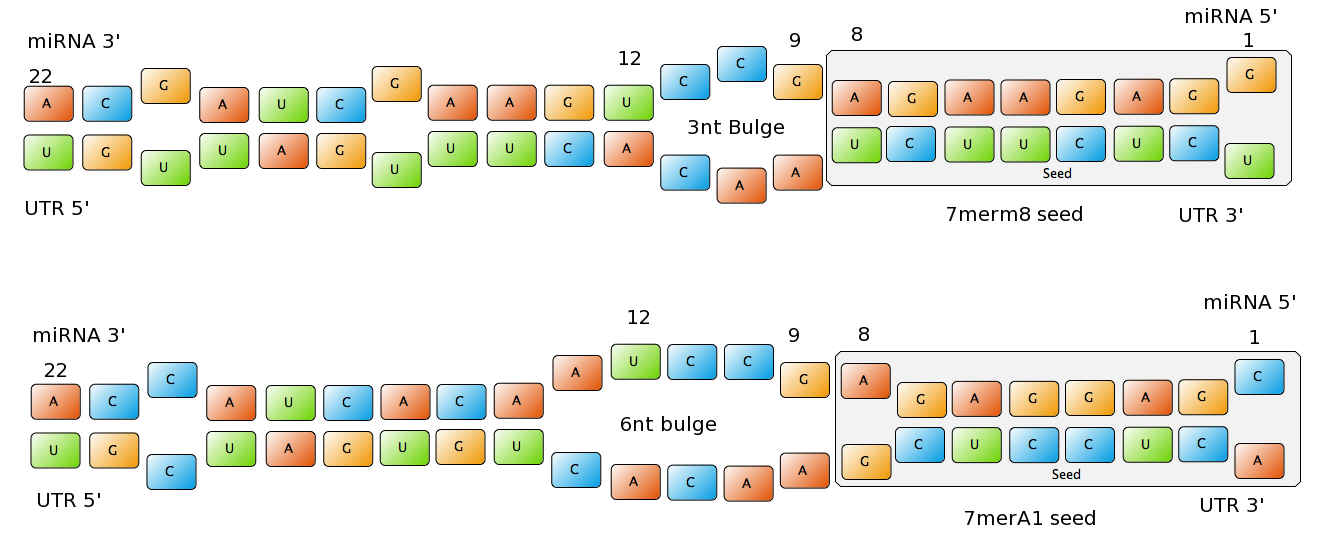

miBSdesigner

Having a binding site designer was crucial to complete the computational approach to our project: miBSdesigner is an easy-to-use application to create in silico binding sites for any given miRNA. Using our device, the user will be able to generate binding sites with several different properties.

Input

The user has to input a name for the miRNA, which is used to name the primers. The miRNA sequence must be 22 nucleotides long and has to be entered in the 5' to 3' direction (both DNA and RNA sequences are accepted, and any extra characters will be removed from the sequence). The user can also enter an inert spacer sequence if they need to place the binding site further along in the 3'UTR region (it is recommended that the binding site is at least 15 nucleotides away from the stop codon). Initially the user can choose between a perfect binding site (matching the 22 nucleotides) or an almost perfect binding site (matching all of the nucleotides, but leaving a 4-nucleotide bulge between positions 9 and 12). Apart from these two options, the user can further modify the binding site to meet their individual requirements.

Seed Types

Figure 1: Interactions between two miRNAs and their binding sites with different types of seeds.

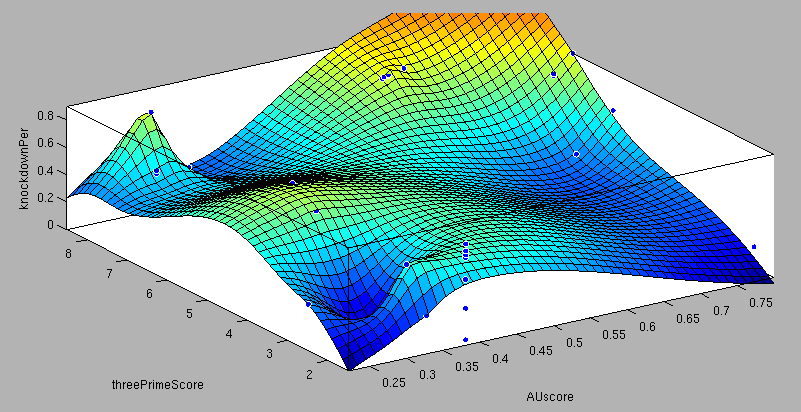

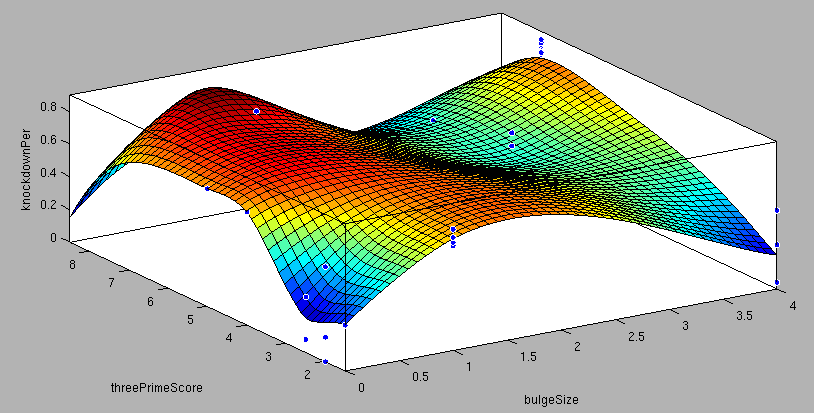

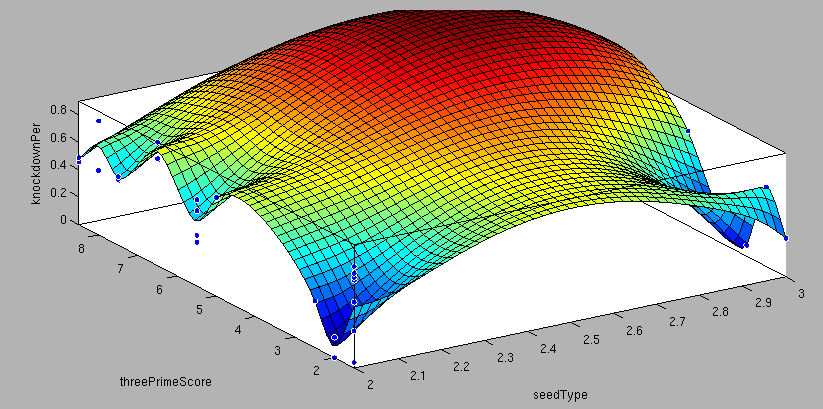

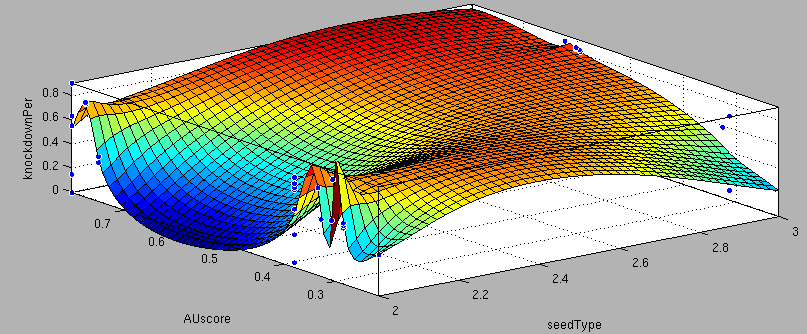

- 6mer (abundance 21.5%): only nucleotides 2-7 of the miRNA match the mRNA.
- 7merA1 (abundance 15.1%): nucleotides 2-7 match the mRNA, and there is an adenine in position 1.
- 7merm8 (abundance 25%): nucleotides 2-8 match the mRNA.
- 8mer (abundance 19.8%): nucleotides 2-8 match the mRNA, and there is an adenine in position 1.
- Apart from any of these options, the user can decide to create a customized seed with one mismatch included, by inputting a number (between 2 and 7) in the Customized mismatch position textbox.

The percentages of abundance are calculated among conserved mammalian sites for a highly conserved miRNA (Bartel (2009)).

Supplementary Region

In miBSdesigner, the user can choose among several types of supplementary regions, starting with 3 matching nucleotides (14-16), increasing sequentially until 8 (13-20), and then total matching (from 13-22, leaving a bulge) (Grimson A et al (2007)). If the user needs some other specific supplementary region, they can customize the sequence by inputting the desired matching nucleotides (as numbers from 9 to 22, separated by commas).

AU Content

To allow the user to improve the efficiency of their binding sites, miBSdesigner offers options to increase the AU content by adding adenine or uracil to positions around the matches (specifically in -1, 0, 1, 8, 9 and 10). The function is designed so that it varies the AU content without introducing new pairings.

Sticky Ends

To facilitate the task of introducing the binding site into a plasmid, the user can add sequences to both ends of the binding site. Initially, the user can choose among the RFC-12 standard for biobricks BB2, the XmaI/XhoI restriction enzymes used in our miTuner construct, or custom sequences input by the user.
In the last case, the output sequences will not be directly ready for cloning: the user has to either digest the construct prior to ligation, or process the primers before ordering them to remove the extra nucleotides and create the overhangs.

Output

miBSdesigner generates the primer needed to integrate the desired binding site into a plasmid, along with the primer for the complementary strand. It also produces specific names for the two primers.

mUTING

mUTING is a tool developed to generate binding sites for miRNAs that could be used for tissue targeting, using either an on-targeting or an off-targeting strategy. It takes as input the target and off-target tissues as well as the desired targeting strategy. The user can also specify a threshold for the difference in the level of relative expression (within a tissue) of miRNAs between target and off-target tissue. The program searches through a database of expression levels and returns a list of possible miRNAs that could be used. From these, the desired miRNA can be selected, and the final output is generated in the form of sense and anti-sense oligomers with overhangs that could be used to put binding sites in tandem or into a vector.

Input

The input for the tool is rather simple and consists of five fields.

- Organism: the tool lets you choose between Human, Rat and Mouse as the source organism.
- Target: from a list of tissues, the target (the tissue where the gene has to be expressed) can be selected.
- Off-target: a list from which multiple off-targets can be selected. Here, the tissues from which gene expression has to be excluded can be included.
- Targeting: this option lets you select the targeting strategy you want to employ.
- Threshold: the threshold for the difference in the level of relative expression of miRNA in the target and off-target tissue can be set here. The default value is 0.001.

Data

The expression data and sequence data that the tool makes use of were taken from pre-existing data sources.
- Sequences: mature miRNA sequences were obtained from miRBase Sequence Database Release 16 (Griffiths-Jones S. et al. (2008)).
- Expression profiles: miRNA expression profiles were collected from a previously published resource of 172 human, 64 mouse and 16 rat small RNA libraries extracted from major organs and cell types (Landgraf et al. (2007)). The expression values in the data represent the number of cloned mature microRNAs that were sequenced in each library and reported as clone counts. The counts are normalized by the total number of microRNAs that were cloned in each library. These values are then used to calculate the difference in relative miRNA levels for differential expression of the construct.

Processing

The data are processed by a script written in Perl. After the primary inputs mentioned above are submitted, the tool gives the user a choice of different miRNAs that fulfill the criterion set in the input. These are displayed along with the miRNA expression values in the target (in case of off-targeting) or in the off-targets (in case of on-targeting). The expression values in the off-targets and target in the respective cases are required to be zero. Based on these values, the user can select the most suitable miRNA for their construct.

Output

The final output is the binding site for the miRNA selected by the user. It consists of the sense strand and the anti-sense strand that would code the binding site. These are flanked by a spacer sequence that could be used for putting binding sites in tandem and for introducing cloning sites.

Modeling

The Neural Network and the Fuzzy Logic Model explained here are the basis of the miRockdown tool. The results of the optimized models are integrated as a database, which allows miRockdown to output binding sites with a confidently predicted protein knockdown efficiency.
Parameterization Concept

One of the hardest tasks in the development of our models was to come up with a good strategy to generate input parameters from the raw data. In our case, the raw data are the binding site sequence and the corresponding sh/miRNA sequence. The final parameterization concept unites a basic distinction between perfect, bulged (near-perfect) and endogenous miRNA-like BS with the advanced 3'-scoring and AU-content evaluation. The endogenous miRNA-like BS parameter is further split into the three seed types. The targetscan_50_context_scores algorithm (Rodriguez et al., 2007) was used to characterize binding sites with respect to 3'-pairing and AU-content score. TargetScan aligns the miRNA with the mRNA sequence starting from a given seed position in such a way that the highest possible 3'-score is reached. Pairing at miRNA nucleotides 13-16 adds 1 to the score; pairings outside this region add 0.5. Offsets between bound miRNA and mRNA are also allowed, but there is a penalty of 0.5 points for an offset higher than 2 nucleotides. The AU content of the 30 nucleotides upstream and downstream of the mRNA seed sequence is rated depending on the seed type. The impact of the nucleotides decreases with the distance from the seed. The scoring system is based on regressions applied to datasets from human, mouse, rat and dog mRNA knockdown (Grimson et al., 2007). Since all major prior modeling approaches used mRNA levels as the training set, our approach will give a completely new insight into miRNA binding site functionality.

These surface fits show the correlation of increasing 3' Binding Score and AU content Score with increasing knockdown efficiency of the binding sites.
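The 3'-scoring rule described above can be written down as a short sketch. It only encodes the simplified rule as stated in this section (1 point per pairing at positions 13-16, 0.5 points elsewhere, a 0.5-point penalty for an offset above 2 nucleotides) and assumes that the alignment has already been done; it is not the TargetScan implementation, which also searches for the best-scoring alignment. The function name and its arguments are hypothetical.

```python
def three_prime_score(paired_positions, offset=0):
    """Score 3' pairing from a list of paired miRNA positions (1-based).

    paired_positions: miRNA nucleotides outside the seed that pair with the mRNA.
    offset: shift between bound miRNA and mRNA, in nucleotides.
    """
    score = 0.0
    for pos in paired_positions:
        # Pairing at nucleotides 13-16 counts fully, other pairings count half.
        score += 1.0 if 13 <= pos <= 16 else 0.5
    # Single flat penalty for offsets larger than 2 nt, as stated in the text.
    if abs(offset) > 2:
        score -= 0.5
    return score

print(three_prime_score([13, 14, 15, 16]))
print(three_prime_score([12, 13, 14, 15, 16, 17], offset=3))
```

With this rule, pairing at positions 13-16 alone yields a score of 4, while the second call adds two half-weighted pairings and subtracts the offset penalty.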

Neural Network Model

Neural Network theory

An Artificial Neural Network, usually abbreviated NN, is a computational model inspired by the biological nervous system. The network is composed of simple elements called artificial neurons that are interconnected and operate in parallel. In most cases the NN is an adaptive system that can change its structure depending on the internal or external information that flows into the network during the learning process. The NN can be trained to perform a particular function by adjusting the values of the connections, called weights, between the artificial neurons. Neural Networks have been employed to perform complex functions in various fields, including pattern recognition, identification, classification, speech, vision, and control systems.

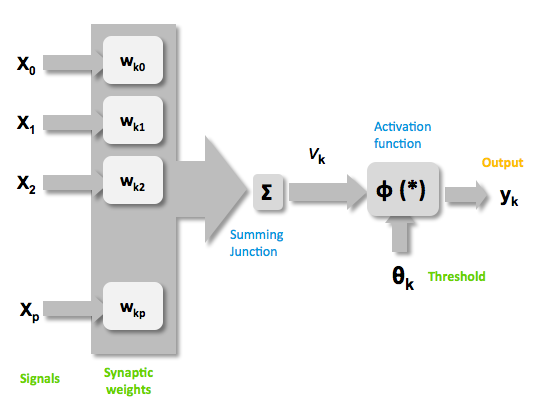

Mathematically, three basic components describe a single-layer network: the synapses of the artificial neurons, which are modeled as weights and represent how strong the connection between an input and an artificial neuron is; an adder, which sums up all the weighted inputs; and an activation function, which controls the amplitude of the output of the single layer. Generally there are three types of activation function: threshold, sigmoid and piecewise linear. For our model the sigmoid function has been used, which bounds the output between 0 and 1 or between -1 and 1 (Kröse et al, 1996).

Figure 2: representation of the mathematical model of a biological neuron.
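The three components named above (weights, adder, activation function) can be illustrated with a minimal sketch of a single artificial neuron. The input and weight values are made up for illustration; the logistic function is used for the 0 to 1 range and the hyperbolic tangent for the -1 to 1 range.

```python
import math

def logistic(x):
    """Sigmoid that maps any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron_output(inputs, weights, bias=0.0, activation=logistic):
    """Weighted sum of the inputs (adder) passed through an activation function."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(total)

# Hypothetical inputs and synaptic weights.
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, 0.1]

y01 = neuron_output(inputs, weights)                        # output in (0, 1)
ypm = neuron_output(inputs, weights, activation=math.tanh)  # output in (-1, 1)
print(y01, ypm)
```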

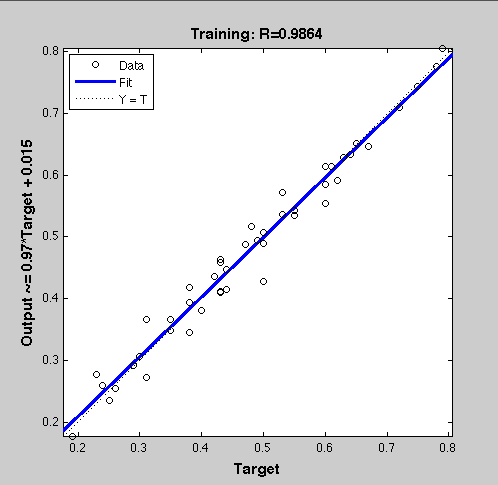

Figure 3: Training of a Neural Network.

Model description

Input/target pairs

The NN model has been created with the MATLAB NN-toolbox. The input/target pairs used to train the network comprise experimental and literature data (Bartel et al., 2007). The experimental data were obtained by measuring, via luciferase assay, the strength of knockdown due to the interaction between the shRNA and the binding site situated in the 3'UTR of the luciferase gene (miTuner). Nearly 30 different rationally designed binding sites were tested and the respective knockdown strength calculated.

Characteristics of the Network

The neural network comprised two layers (multilayer feedforward network). The first layer is connected to the network input and comprised 15 artificial neurons. The second layer is connected to the first one and produced the output. A sigmoid activation function was used for the first layer and a linear activation function for the second. The algorithm used for minimizing the cost function (sum squared error) was Bayesian regularization, which takes place within the Levenberg-Marquardt algorithm. The algorithm updates the weight and bias values according to Levenberg-Marquardt optimization and overcomes the problem of interpolating noisy data (MacKay, 1992) by applying a Bayesian framework to the NN learning problem.
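The architecture described above can be sketched as a forward pass in plain Python. This is only an illustration of the layer structure (15 sigmoid neurons followed by one linear output) and of the sum squared error; it does not implement the training by Bayesian regularization that the MATLAB toolbox performs. The number of inputs, the random weights and the sample data are assumptions.

```python
import math
import random

def logistic(x):
    return 1.0 / (1.0 + math.exp(-x))

def forward(x, w_hidden, b_hidden, w_out, b_out):
    """Two-layer feedforward pass: sigmoid hidden layer, linear output layer."""
    hidden = [logistic(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w_hidden, b_hidden)]
    return sum(w * h for w, h in zip(w_out, hidden)) + b_out

def sum_squared_error(outputs, targets):
    """Cost function: sum of squared differences between outputs and targets."""
    return sum((o - t) ** 2 for o, t in zip(outputs, targets))

random.seed(0)
N_INPUTS, N_HIDDEN = 3, 15  # 15 hidden neurons as in the text; 3 inputs is an assumption

# Untrained, randomly initialised weights and biases.
w_hidden = [[random.uniform(-1, 1) for _ in range(N_INPUTS)] for _ in range(N_HIDDEN)]
b_hidden = [random.uniform(-1, 1) for _ in range(N_HIDDEN)]
w_out = [random.uniform(-1, 1) for _ in range(N_HIDDEN)]
b_out = 0.0

# Hypothetical input vectors and knockdown targets.
samples = [[1.0, 0.5, 0.2], [0.0, 0.3, 0.9]]
targets = [0.8, 0.1]

outputs = [forward(x, w_hidden, b_hidden, w_out, b_out) for x in samples]
sse = sum_squared_error(outputs, targets)
print(outputs, sse)
```

Training would consist of adjusting the weights and biases so that the sum squared error decreases.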

Results

Two experiment batches were performed. The network trained only with data coming from the literature was used to predict the outcome of the first experiment batch. Figure 4 shows the regression line of the correlation between the NN outputs and the targets used for training this network, while Table 1 shows the simulated and experimental knockdown percentages. The results make clear that the bulge size does have an effect on the knockdown percentage: the network is able to simulate the knockdown with high precision when the bulge size is in the range of 3 to 4 nt, but not when it becomes 1 or 0. It is important to stress here that the network was trained with literature values that did not take the bulge size into consideration as a key factor; TargetScan, in fact, does not evaluate this binding site feature in the scoring process.

Brief conclusion

The bulge size was identified as a very important parameter for knockdown efficiency. This led us to train another Neural Network only with our experimental data, including the bulge size in the input vector.

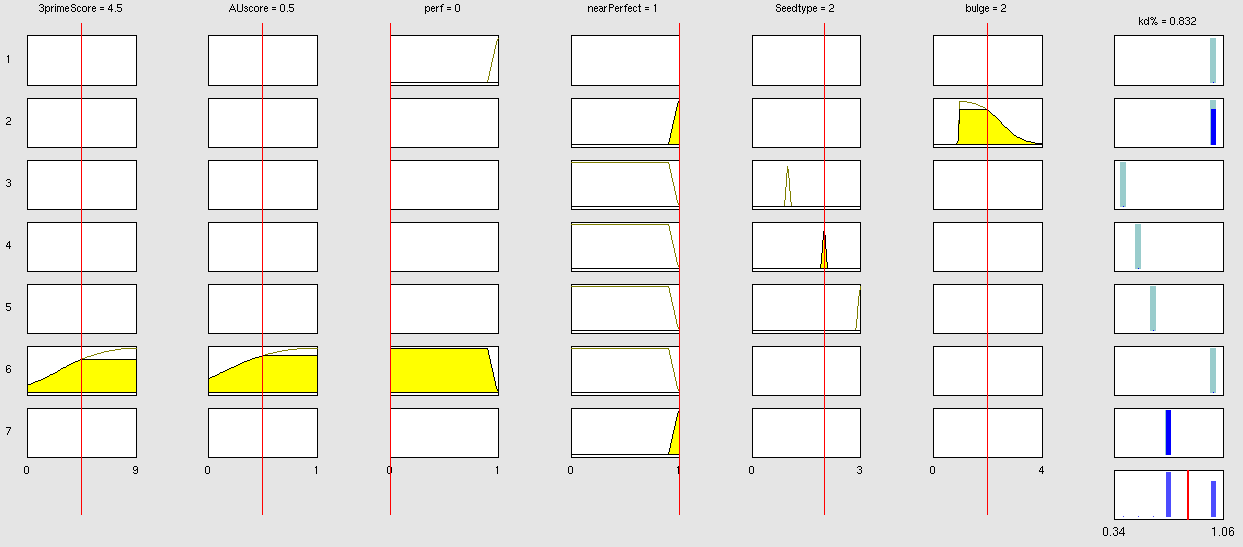

Simulation and experimental verification

Fuzzy Logic Model

Why use a fuzzy inference system to model binding site efficiency?

To be able to evaluate the complex features of an shRNA or miRNA binding site and predict the resulting knockdown percentage of the protein, we developed a fuzzy inference system (FIS). The parameterized properties of the binding sites serve as input and are processed into the knockdown percentage as the single output. Thus our fuzzy inference system is characterized as a multiple input, single output (MISO) fuzzy inference system. Fuzzy Logic is a rule-based approximate artificial reasoning method developed by Lotfi Zadeh in 1965. Its motivation is the observation that humans often think and communicate in a vague way, and yet can make precise decisions (Nelles O (2000)). It has been widely used in engineering and Artificial Intelligence approaches such as Fuzzy Controllers and Fuzzy Expert Systems. Fuzzy Logic has also been used for the modeling of biological pathways (Bosl et al (2007)) and to analyze gene regulatory networks (Laschov et al (2009)). Key advantages of Fuzzy Logic-based approaches are that (i) models can be constructed based on prior knowledge of the system and experimental data, (ii) intermediate states can be encoded for inputs and outputs, which improves on other logic approaches that can only deal with ON/OFF states, such as Boolean models (Aldridge et al (2009)), and (iii) simulations can be derived from both qualitative and quantitative data, both of which can be cast into the form of IF-THEN rules. Thus, FL constitutes a powerful approach for the understanding of heterogeneous datasets. Fuzzy inference systems are based on membership functions (MF). MFs rate how much an input parameter satisfies a criterion on a scale from 0 to 1. There can be one or multiple MFs per input parameter, like different criteria applied to one input.
The height of a person, for example, can be evaluated with one MF: how much the person satisfies being tall. Alternatively, there could be 3 MFs, one evaluating the membership to short people, the second to medium-sized people and the third to tall people. Changing the shape of the MF makes it possible to have either functional dependencies, allowing intermediate states of the membership values, or simple ON/OFF states, where the membership value can only be 0 or 1. Thus different kinds of input parameters can be evaluated with a fuzzy inference system. For the simple height example model, the age of the person could be taken as a second input and evaluated by an MF that is 0 until the age of 18 and 1 for older persons. Thus the model could differentiate between young and grown-up persons. Simple if-then rules can then be used to combine the input MFs to an output MF. The satisfaction of a rule by an object (a set of input parameters) is defined by the degree of membership of the object to the different MFs. The higher the satisfaction of the rule, the higher the membership to the output MF. The output MF can be a function like the input MF. This is the case in Mamdani method fuzzy inference systems (Mamdani et al, (1975)). We are using a Sugeno method fuzzy inference system (Sugeno (1985)), where the output MF is either a constant or a linear function depending on the input parameters. The advantage of a Sugeno fuzzy inference system is that it is computationally more efficient and easier to optimize or adapt, due to the simpler output MF. Due to the non-intuitive combination of the 3'-pairing and AU-content scores, our fuzzy inference system needs to be optimized computationally.
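The height and age example can be turned into a minimal sketch of a zero-order Sugeno system, where each rule has a constant output and the result is the average of these constants weighted by how strongly each rule is satisfied. The ramp limits of the "tall" MF, the use of the minimum for AND, the three rules and their output constants are all assumptions chosen for illustration; they are not the rules of our binding site model.

```python
def mf_tall(height_cm):
    """Membership to 'tall': linear ramp from 0 at 150 cm to 1 at 190 cm (assumed limits)."""
    return min(1.0, max(0.0, (height_cm - 150.0) / 40.0))

def mf_adult(age_years):
    """ON/OFF membership: 0 below the age of 18, 1 from 18 on (assumed boundary handling)."""
    return 1.0 if age_years >= 18 else 0.0

def sugeno(height_cm, age_years):
    """Zero-order Sugeno inference: weighted average of constant rule outputs."""
    tall, adult = mf_tall(height_cm), mf_adult(age_years)
    # (firing strength, constant output) for each hypothetical rule.
    rules = [
        (min(tall, adult), 1.0),        # IF tall AND adult THEN 1.0
        (min(1.0 - tall, adult), 0.5),  # IF not tall AND adult THEN 0.5
        (1.0 - adult, 0.0),             # IF not adult THEN 0.0
    ]
    total_strength = sum(w for w, _ in rules)
    return sum(w * z for w, z in rules) / total_strength

print(sugeno(170, 30))
```

For a 170 cm tall adult the first two rules fire equally, so the output lies halfway between their constants.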

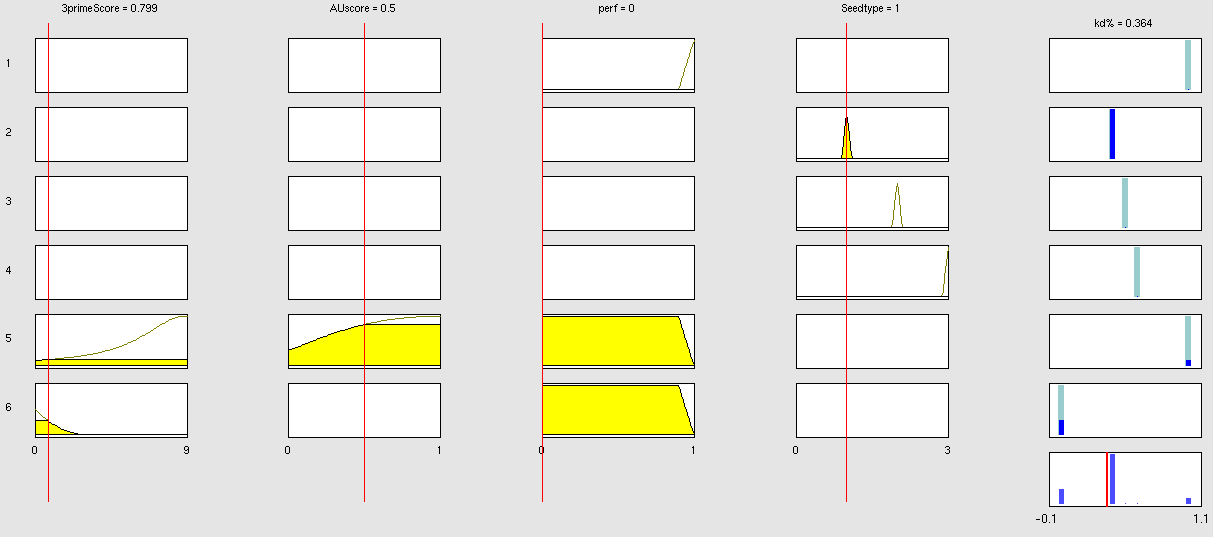

Optimizable Extendable Fuzzy Model Concepts

Consider low 3' score concept: This model concept takes into account that binding sites with a 3'-score under 3 did not show a significant change in knockdown efficiency compared to a control with only seed pairing (Grimson et al., 2007). This is realized by rule number 6.

Strength: general prediction, no dependency on conditions. Assured by [normalization strategy] based on previous knowledge (Bartel (2009)).

Our fuzzy inference system can deal with 3 different kinds of shRNA binding sites. Perfect, bulged and endogenous-like binding sites are treated separately, due to the differences in their biological mechanism, as discussed earlier [link to binding site properties]. A perfect binding site is evaluated by a simple ON/OFF input MF evaluating the boolean input of

We came up with different concepts of what kind of input parameters to integrate into the fuzzy inference model and how to evaluate them. Therefore we parameterized the properties of a large set of binding sites according to various BS characteristics. The targetscan_50_context_scores algorithm (Rodriguez et al., 2007), which evaluates binding sites with respect to 3'-pairing and AU content, gives out a score that seems appropriate to distinguish especially between endogenous miRNA-like binding sites. A more detailed description of the concept of binding site parameterization can be found under Model Training Set.

Input parameters

Input membership functions

Output membership functions

Rules

Parameters and their functionality Output Membership function values 7merA1 7merM8 8mer (Nearperfect) (Perfect)

Fuzzy Model Optimization

Connection of Fuzzy Logic Toolbox and Global Optimization Toolbox via script.

Result

Click here if you are interested in more recent model optimization results!

Data Overview

References

- Bartel D.P., MicroRNAs: Target Recognition and Regulatory Functions, Cell 136:215-233 (2009).
- Grimson A., Farh K.H.F., Johnston W.K., Garrett-Engele P., Lim L.P., Bartel D.P., MicroRNA Targeting Specificity in Mammals: Determinants beyond Seed Pairing, Molecular Cell 27:91-105 (2007).
- Laschov D., Margaliot M., Mathematical modeling of the lambda switch: a fuzzy logic approach, J Theor Biol. 21:475-89 (2009).
- Mamdani E.H., Assilian S., An experiment in linguistic synthesis with a fuzzy logic controller, International Journal of Man-Machine Studies 7(1):1-13 (1975).
- Bosl W.J., Systems biology by the rules: hybrid intelligent systems for pathway modeling and discovery, BMC Systems Biology 1:13 (2007).
- Sugeno M., Industrial applications of fuzzy control, Elsevier Science Pub. Co. (1985).
- Nelles O., Nonlinear System Identification, Springer Verlag GmbH & Co., Berlin (2000).
- Rodriguez J., Ge R., Walker K., Bell G., targetscan_50_context_scores.pl, Whitehead Institute for Biomedical Research (2007, 2008).
- Kröse B., van der Smagt P., An introduction to Neural Networks, 8th Ed. (1996).
- Aldridge B.B., Saez-Rodriguez J., Muhlich J.L., Sorger P.K., Lauffenburger D.A., Fuzzy logic analysis of kinase pathway crosstalk in TNF/EGF/insulin-induced signaling, PLoS Comput Biol. 5:e1000340 (2009).
- MacKay D.J.C., A Practical Bayesian Framework for Backpropagation Networks, Neural Computation 4(3):448-472 (1992).
- Landgraf P., Rusu M., Sheridan R., Sewer A., Iovino N., Aravin A., Pfeffer S., Rice A., Kamphorst A.O., Landthaler M., Lin C., Socci N.D., Hermida L., Fulci V., Chiaretti S., Foa R., Schliwka J., Fuchs U., Novosel A., Muller R.U., Schermer B., Bissels U., Inman J., Phan Q., Chien M., A mammalian microRNA expression atlas based on small RNA library sequencing, Cell 129:1401-1414 (2007).
- Griffiths-Jones S., Saini H.K., van Dongen S., Enright A.J., miRBase: tools for microRNA genomics, Nucleic Acids Research 36:D154-D158 (2008).
